Evolution of PaaS

Evolution of Platform as a Service

Overview

The three well-known cloud service models are:

- IaaS: Infrastructure as a Service

- PaaS: Platform as a Service

- SaaS: Software as a Service

IaaS and SaaS are well-understood models. The PaaS model, however, keeps evolving as Linux containers go mainstream.

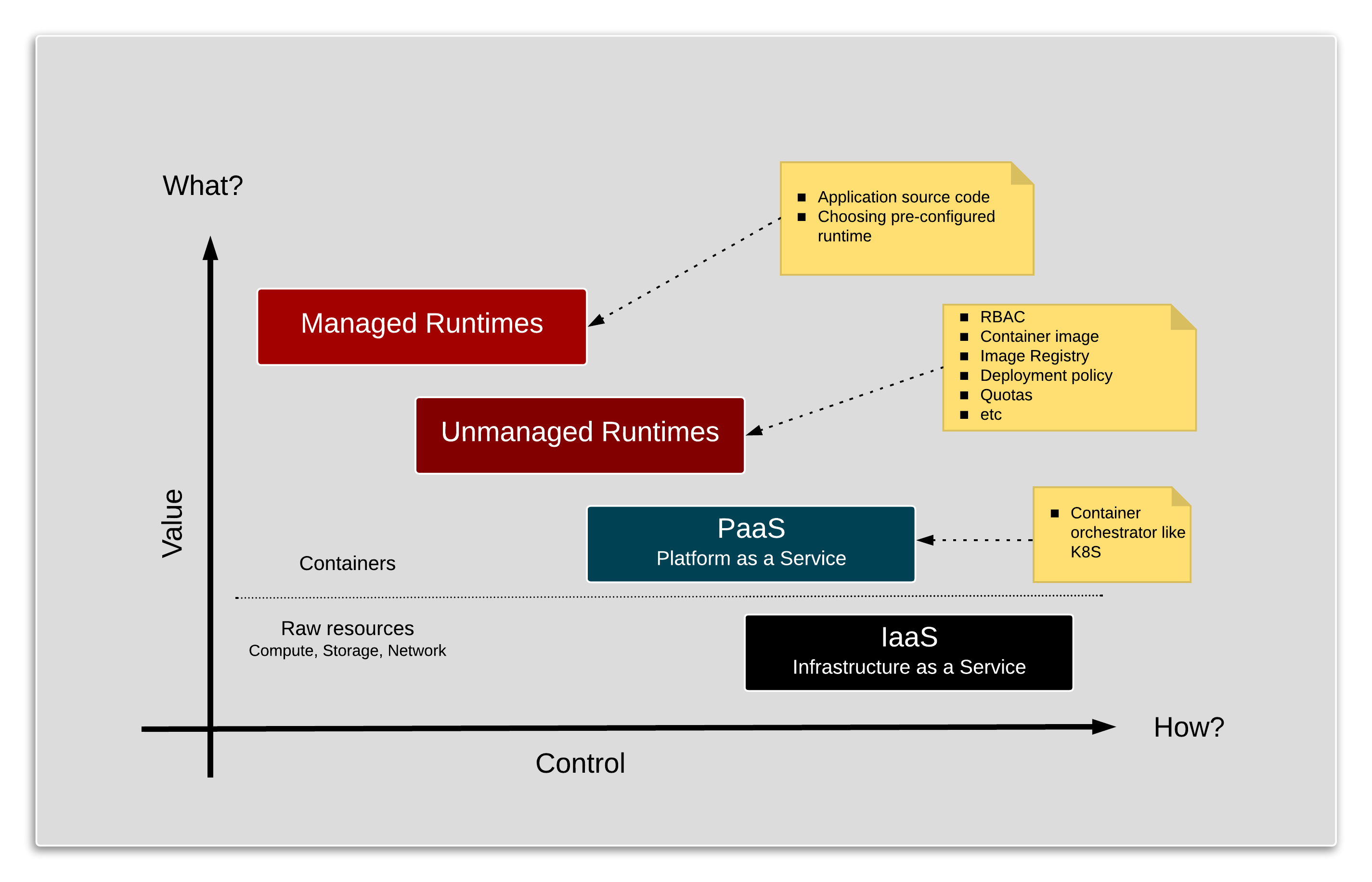

PaaS provides a wide spectrum of configuration points for customers to design their services. For example, in a true function-as-a-service model[1], the customer simply provides source code that performs a task when a certain condition is triggered. At the other end of the spectrum, the customer might want to design a service from the bottom up, piecing together all the building blocks that define how the service is constructed.

At one end of the spectrum, you deal only with what task needs to be performed. At the other end, you deal not only with what the service does but also with how it is technically built, which in turn requires that the service is appropriately delivered and life-cycle managed.

Value vs. Control in PaaS

As the picture above highlights, the higher up the stack you bind your application, the less technical debt you incur and carry forward. In turn, you have less control over how the service is built. This trade-off seems to be well understood (maybe even appreciated?) but is often overlooked[2].

Even with the obvious value to be gained higher up the stack, the development community generally prefers to work at the PaaS layer, if not the IaaS layer.

Looking back, adoption of IaaS (EC2, Google Compute Engine) was fast-tracked by developers because it gave them a sense of being in control of their (project’s) destiny. The key enabling features of this model were:

- Self-service capability

- Backed by an automated delivery of the service or resources

Compared to the concierge style of service offered by traditional IT shops, this capability made IaaS providers extremely popular with developers. Lead times of days or weeks, if not months, to provision environments were not uncommon in traditional IT shops.

The PaaS Layer

Now that containers are becoming popular with developers, so is the true PaaS model. The most common technology used to implement this PaaS model is Kubernetes. Popular examples of this service:

- GKE: Google Kubernetes Engine

- AKS: Azure Kubernetes Service

- EKS: Amazon Elastic Container Service for Kubernetes

- Red Hat OpenShift Online

- DIY

Even in this model, the developer community is really treating PaaS as if it were IaaS. For example, to make it enterprise ready, raw Kubernetes requires scaffolding around it, with features like:

- RBAC strategy

- Storage options

- Container Image Registry

- Deployment policies

- Life-cycle management of running containers

- Tooling for CI/CD pipelines

This pattern places enormous faith in, and responsibility on, developers to consistently make the right choice at every decision point.

For example, consider all the decisions that influence the supportability matrix for all the applications in the enterprise. Below is just a small snapshot.

| Application name | Kubernetes version | RBAC integration | Block Storage | File Storage | Deployment rules |
|---|---|---|---|---|---|
| ATM locator | 1.11 | None | Persistent Disk SSD (Google) | Google Drive | No access to customer data |
| Branch locator | 1.10 | Node | Premium Disk (Azure) | Azure Files | No access to customer data |
| Loan approval | 1.9 | Active Directory | Pure Storage | Gluster File System | No access to DMZ |
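
To get a feel for how quickly these choices multiply, the sketch below counts the distinct values in each column of the snapshot above. It is only an illustration: the pipe-separated layout and the function name count_variants are assumptions made for this example, not part of any existing tool.

```shell
# Supportability matrix from the table above, one application per line.
# Assumption: fields are separated by "|" in the same order as the table.
matrix='ATM locator|1.11|None|Persistent Disk SSD (Google)|Google Drive|No access to customer data
Branch locator|1.10|Node|Premium Disk (Azure)|Azure Files|No access to customer data
Loan approval|1.9|Active Directory|Pure Storage|Gluster File System|No access to DMZ'

# Count the distinct values in every column after the application name.
count_variants() {
    printf '%s\n' "$matrix" | awk -F'|' '
        BEGIN {
            split("Kubernetes version|RBAC integration|Block Storage|File Storage|Deployment rules", name, "|")
        }
        {
            for (col = 2; col <= NF; col++) {
                # Remember each (column, value) pair the first time it appears.
                if (!((col, $col) in seen)) {
                    seen[col, $col] = 1
                    variants[col]++
                }
            }
        }
        END {
            for (col = 2; col <= 6; col++) {
                printf "%s: %d variant(s)\n", name[col - 1], variants[col]
            }
        }'
}

count_variants
```

Even with only three applications, every technical column already holds three different values, so whoever supports these applications has three Kubernetes versions, three RBAC setups and three storage combinations to keep track of.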

In addition, horizontal concerns like detecting, isolating and recycling vulnerable containers are an absolute must from a security perspective.

As you can imagine, the majority of these capabilities are required by any project that delivers enterprise services. So either each project invests in building and managing its own scaffolding, or these capabilities are provided centrally through an unmanaged runtime as a service model.

The Unmanaged Runtime Layer

This layer focuses on providing standard and consistent container services across the enterprise, including the build, delivery and life-cycle management of individual containers.

This allows the developers to focus on runtimes required to build applications. The runtimes in this instance are the programming languages, frameworks, databases, etc.

This is a good starting point for startups and new enterprises, but very soon a few common runtimes emerge that developers use time and again.

The Managed Layer

There is a case to be made that even these runtimes should be managed in a standard and consistent manner.

Choosing and managing a runtime in a container can be an involved process. For instance, consider the example below.

Example

Optional

This example is meant as an illustration for technically minded people to help understand how some of the technical decisions made could have an impact in the longer run.

The OS matters

At present, the size of containers is very topical. Developers in particular are keen to use small containers. Let's take the simple C program below as an example.

    /*
     * File: hello_world.c
     * Simple program that prints 'Hello World' message
     */
    #include <stdio.h>

    int main(void) {
        printf("\t===========\n");
        printf("\tHello World\n");
        printf("\t===========\n");
        return 0;
    }

Compile the code using the following command.

    cc hello_world.c -o hello_world

This creates an executable called hello_world. Executing it gives the output below.

            ===========
            Hello World
            ===========

Fedora-based container

Build a Fedora-based container image using the following ‘Dockerfile_fedora’.

    FROM fedora:27
    RUN dnf -y update && dnf -y clean all
    COPY hello_world /tmp/hello_world

Build the image and run the C program within the container.

    # Build the docker image
    docker build -t fedora-4-c -f Dockerfile_fedora .

    # Run the container
    docker run fedora-4-c /tmp/hello_world

This gives us the expected output:

            ===========
            Hello World
            ===========

Debian-based container

Build a Debian-based container image using the following ‘Dockerfile_debian’.

    FROM debian:8
    RUN apt-get update
    COPY hello_world /tmp/hello_world

Build the image and run the C program within the container.

    # Build the docker image
    docker build -t debian-4-c -f Dockerfile_debian .

    # Run the container
    docker run debian-4-c /tmp/hello_world

This gives us the expected output:

            ===========
            Hello World
            ===========

Alpine-based container

Build an Alpine-based container image using the following ‘Dockerfile_alpine’.

    FROM gliderlabs/alpine:latest
    COPY hello_world /tmp/hello_world

Build the image and run the C program within the container.

    # Build the docker image
    docker build -t alpine-4-c -f Dockerfile_alpine .

    # Run the container
    docker run alpine-4-c /tmp/hello_world

Running this container results in an error:

    standard_init_linux.go:178: exec user process caused "no such file or directory"
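
This error is easy to misread. The file /tmp/hello_world is in the image; what is usually missing is the dynamic loader that the executable asks the kernel to start. A binary compiled on a glibc-based host such as Fedora or Debian typically requests a glibc loader, while Alpine ships musl libc instead. The sketch below is one way to check this and is not part of the original example: it assumes readelf (from binutils) is installed, and the function names elf_interpreter and check_loader are made up for illustration.

```shell
# Usage: sh check_loader.sh ./hello_world
# Assumption: readelf from binutils is available on the machine running this.

# Print the dynamic loader (ELF interpreter) that a binary requests.
# Prints nothing for static binaries, non-ELF files, or unreadable paths.
elf_interpreter() {
    LC_ALL=C readelf -l "$1" 2>/dev/null |
        sed -n 's/.*Requesting program interpreter: \(.*\)]$/\1/p'
}

# Report whether the requested loader exists on the filesystem
# where this script is running.
check_loader() {
    binary=$1
    loader=$(elf_interpreter "$binary")
    if [ -z "$loader" ]; then
        echo "$binary: no loader requested (static, not ELF, or unreadable)"
    elif [ -e "$loader" ]; then
        echo "$binary: loader $loader is present"
    else
        echo "$binary: loader $loader is MISSING"
    fi
}

# Check the binary named on the command line, defaulting to hello_world.
check_loader "${1:-hello_world}"
```

Run on the build host, this shows which loader hello_world requests; the same path can then be looked for inside the Alpine image. If it is absent there, one option is to link statically (cc -static hello_world.c -o hello_world, assuming the static C library is installed); another is to compile the program inside an Alpine-based container. Either way, the choice of base image turns out to constrain how the application itself has to be built.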

Commercial Perspective

Anecdotal data suggests that 80% of containerised applications are stateless in nature. If they run in a public cloud, these applications could be a significant consumer of resources. If we think of public cloud providers as providers of utility services, then we should be able to tap into the best available rates and service.

In that scenario, it is critical to develop applications higher up the stack than raw Kubernetes. We do that by using a common deployment platform across internal infrastructure and the three public cloud providers (AWS, Google Cloud, Azure).

1. Function as a service (FaaS) is a category of cloud computing services that provides a platform allowing customers to develop, run, and manage application functionalities without the complexity of building and maintaining the infrastructure typically associated with developing and launching an app.

2. In 2008, Google launched its cloud service with App Engine only. Eric Schmidt, then Google CEO, said: “There’s something fundamentally wrong with what we were doing in 2008, we didn’t get the right stepping stones in to the cloud.” Here is the full news article, which is worth reading.